AI agents are changing the way engineering teams plan, build, test, and scale software. For CTOs, engineering managers, startup founders, and enterprise technology leaders, the opportunity is no longer just about using AI as a chatbot. The real advantage comes from designing agentic systems that can reason, act, use tools, evaluate results, and improve software delivery workflows.

Why AI Agents Are the Next Shift in Software Engineering

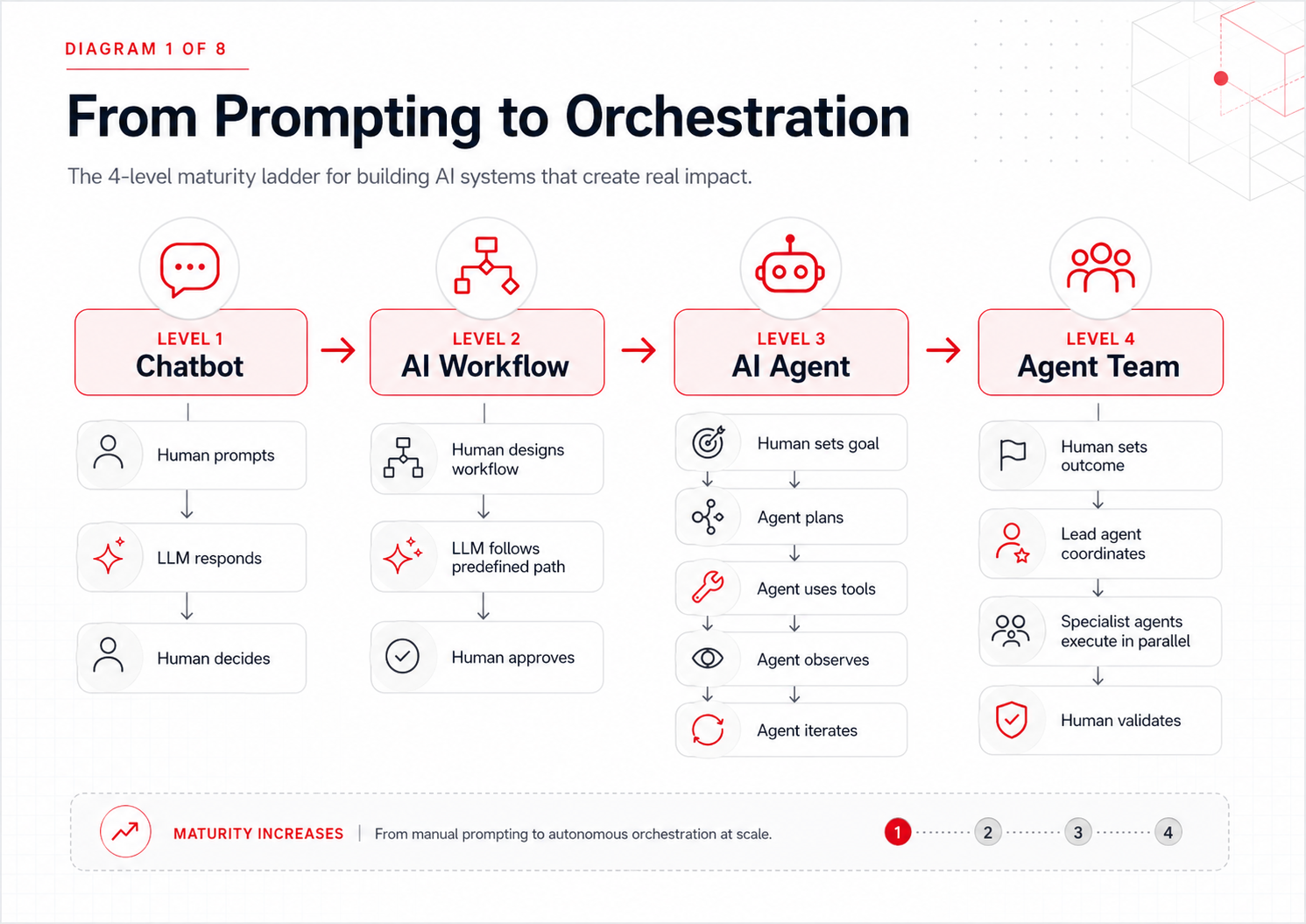

For the past few years, most companies have treated large language models as better chatbots: systems that wait for a prompt, generate a response, and depend on a human to decide what happens next.

That was the first phase of enterprise AI adoption.

Then came AI workflows. Teams started wrapping LLMs inside predefined processes such as Retrieval-Augmented Generation, support triage, documentation generation, code review, and internal knowledge search. These workflows were useful, but they still followed paths designed by humans. The model assisted, but the human or the software workflow remained the final decision-maker.

Now we are entering the next phase: AI agents.

An AI agent does not simply answer a question. It works toward a goal. Given an outcome, it can reason about what needs to happen, choose tools, execute steps, observe results, adjust its plan, and continue until the task is complete.

For engineering leaders, this is more than a tooling upgrade. It changes the basic unit of software work.

The developer’s role is shifting from writing every line of code to directing intelligent systems: defining outcomes, setting constraints, reviewing implementation, validating quality, and designing workflows where humans and agents collaborate effectively.

At MagmaLabs, this shift matters because clients do not just need access to new AI tools. They need practical, scalable, secure ways to operationalize them across real engineering teams. That requires collaboration, technical hunger, and a growth mindset—the same values that guide how high-performing software teams adopt any major platform shift.

Why AI Agents Matter for CTOs and Engineering Leaders

AI agents are especially relevant for teams under pressure to ship faster without lowering quality.

That includes startup founders building MVPs with limited technical capacity, scaling CTOs trying to accelerate roadmap execution, engineering managers balancing delivery speed with maintainability, and enterprise technology leaders managing security, compliance, and cost.

The shared challenge is not simply, “How do we use AI?” It is, “How do we use AI safely, productively, and in a way that improves engineering outcomes?”

The answer is not to give everyone a chatbot and hope productivity improves.

The answer is to design agentic engineering systems—and that is exactly the kind of work our custom software development teams help clients put into production.

1. AI Agents Start With Context Engineering

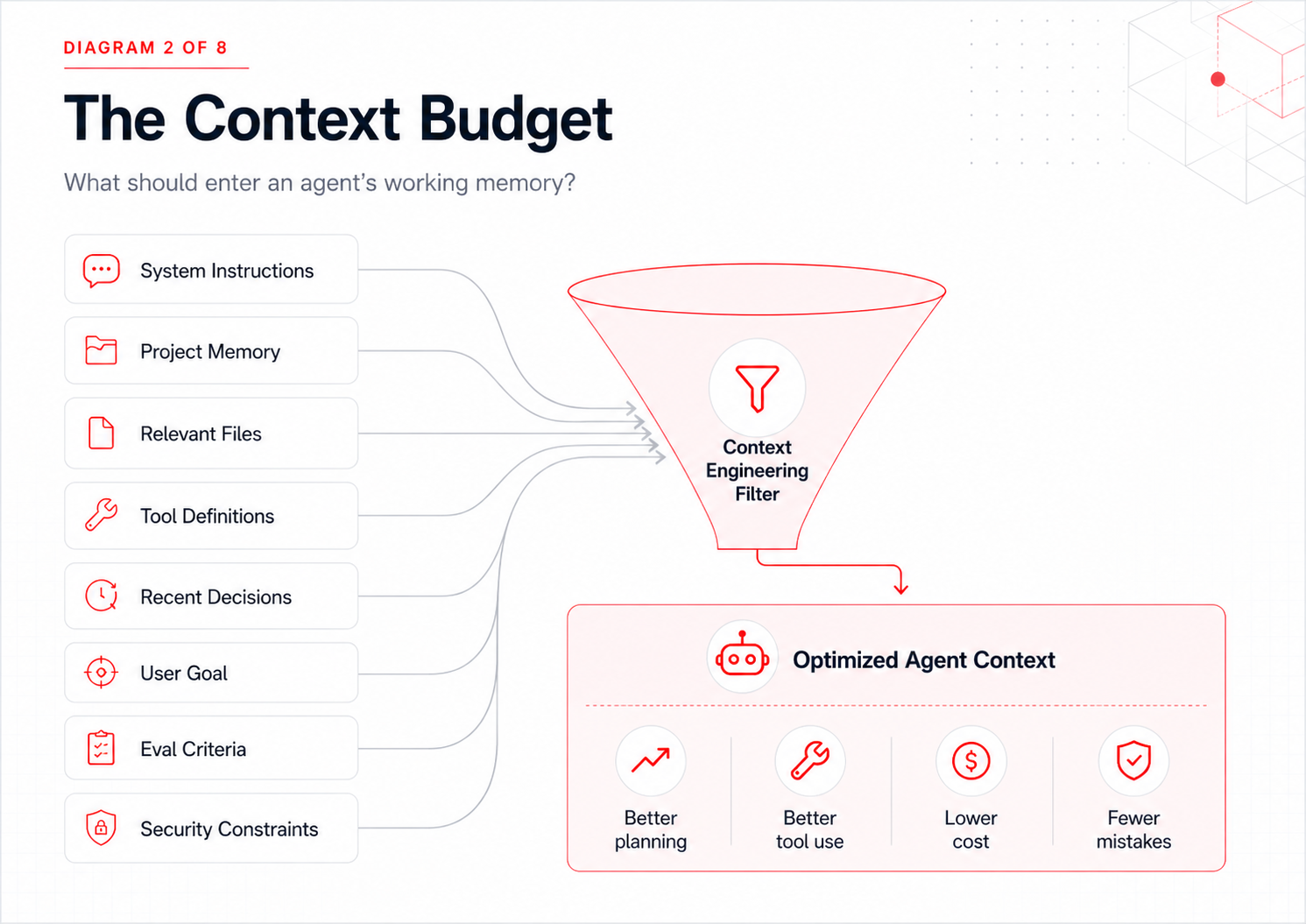

Before building advanced agents, engineering teams need to understand one uncomfortable truth: context is not free.

Every time an LLM processes a request, it works with the information placed in its context window. Long context windows are powerful, but they are not a reason to dump everything into the model.

This is why modern AI teams are moving from prompt engineering to context engineering.

Prompt engineering asks, “How do we phrase the instruction?”

Context engineering asks, “What information, memory, tools, constraints, and intermediate results should the agent have at this exact moment?”

Anthropic defines context engineering as the practice of curating and maintaining the optimal set of tokens during inference, including instructions, tools, memory, external data, and other information that shapes model behavior [1].

That distinction is critical.

A larger context window should be treated as an insurance policy, not a dumping ground. The more irrelevant information the model sees, the more likely it is to become distracted, miss important details, or make poor tradeoffs.

Practical Context Engineering Patterns for AI Agents

Manual compaction

Instead of letting a session grow until the model becomes overloaded, periodically ask the agent to summarize architectural decisions, unresolved questions, active tasks, and constraints. Then restart with a clean context.
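As a rough illustration of manual compaction, the sketch below replaces a long session with a single summary message once an estimated token budget is exceeded. The `Session` class, the four-characters-per-token heuristic, and `fake_summarize` are all assumptions made for this example; in practice the summary would come from a model call.

```python
from dataclasses import dataclass, field


@dataclass
class Session:
    """Hypothetical container for an agent conversation."""
    messages: list[str] = field(default_factory=list)

    def token_estimate(self) -> int:
        # Rough heuristic (assumption): about 4 characters per token.
        return sum(len(m) for m in self.messages) // 4


def compact(session: Session, summarize, budget: int) -> Session:
    """Return a fresh session seeded with a summary once the budget is exceeded."""
    if session.token_estimate() <= budget:
        return session
    summary = summarize(session.messages)
    return Session(messages=[f"SUMMARY OF PRIOR WORK:\n{summary}"])


def fake_summarize(messages: list[str]) -> str:
    # Stand-in for a model call that extracts decisions, open questions,
    # active tasks, and constraints from the conversation.
    return f"{len(messages)} messages condensed into decisions, questions, tasks, constraints."


session = Session(messages=["x" * 400 for _ in range(10)])  # about 1,000 estimated tokens
compacted = compact(session, fake_summarize, budget=500)
print(len(compacted.messages), compacted.token_estimate())
```

The useful property is that the restart is explicit: the team decides what survives compaction instead of letting the oldest messages silently fall out of the window.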

Persistent memory

For engineering workflows, a lightweight MEMORY.md, ARCHITECTURE.md, or DECISIONS.md file can help agents retain important codebase conventions across sessions without forcing every historical conversation back into context.

Just-in-time retrieval

Rather than loading every file, ticket, database schema, API document, and tool definition upfront, give the agent references it can inspect only when needed.
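A minimal sketch of just-in-time retrieval, assuming a local workspace directory: the agent first receives only lightweight references (paths and sizes), then reads a single file when it decides it needs one. The function names `list_references` and `read_reference` are hypothetical, not part of any particular framework.

```python
import tempfile
from pathlib import Path


def list_references(root: Path) -> list[dict]:
    """Return lightweight references (path and size) instead of file contents."""
    return [
        {"path": str(p.relative_to(root)), "bytes": p.stat().st_size}
        for p in sorted(root.rglob("*"))
        if p.is_file()
    ]


def read_reference(root: Path, rel_path: str, max_chars: int = 2000) -> str:
    """Load one file on demand, truncated so a single read cannot flood the context."""
    target = (root / rel_path).resolve()
    if root.resolve() not in target.parents:
        raise ValueError("path escapes the workspace")
    return target.read_text(encoding="utf-8")[:max_chars]


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    (root / "schema.sql").write_text("CREATE TABLE users (id INTEGER);", encoding="utf-8")
    (root / "README.md").write_text("# Demo", encoding="utf-8")
    refs = list_references(root)                 # cheap: names and sizes only
    schema = read_reference(root, "schema.sql")  # expensive: only when needed
    print(refs, schema)
```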

For CTOs and engineering managers, the lesson is simple: the smartest agent architecture is not the one with the largest context window. It is the one that gives the model the right context at the right time. This is the same discipline we apply across our AI engineering services.

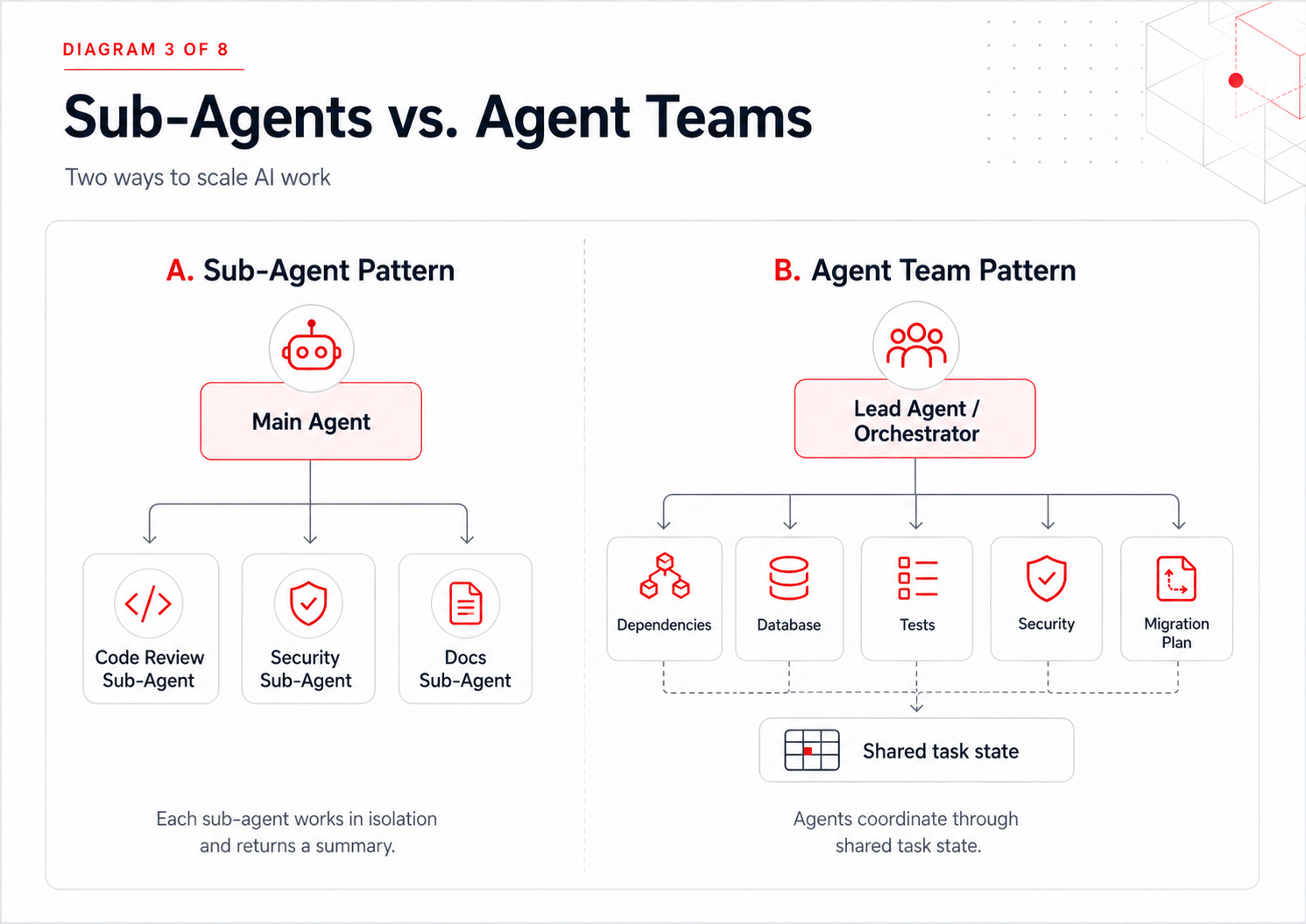

2. Sub-Agents vs. Agent Teams: Two Ways to Scale AI Agents

As software tasks become more complex, a single agent can become overloaded. The solution is not always “use a bigger model.” Often, the answer is to divide the work.

There are two important patterns here: sub-agents and agent teams.

Sub-Agents: Isolated Specialist AI Agents

A sub-agent is like a focused specialist. The main agent delegates a task, the sub-agent works in an isolated context, and then it returns a clean summary.

This is useful when you want separation of concerns.

For example, a main coding agent could ask a reviewer sub-agent to inspect a pull request. That reviewer sub-agent might only have permission to read files and search the codebase. It cannot edit code. That constraint turns the sub-agent into a safer, more reliable reviewer.

This architecture is powerful because constraints become a design tool.

You can create:

- A security review sub-agent

- A test-generation sub-agent

- A documentation sub-agent

- A migration-planning sub-agent

- A dependency-audit sub-agent

- A performance-analysis sub-agent

Each can have its own model, tools, permissions, and context.

This also enables cost-aware routing. A faster, lower-cost model can handle documentation lookup or repetitive analysis, while a more capable model is reserved for complex architecture or production-critical reasoning.
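One way to picture this is a small registry of sub-agent specifications, each with its own model tier and tool allowlist. This is a sketch only: the `SubAgentSpec` type, the tool names, and the `small-model` / `large-model` labels are placeholders invented for the example, not real model identifiers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SubAgentSpec:
    name: str
    model: str                      # placeholder tier label, not a real model ID
    allowed_tools: frozenset[str]   # the constraint that shapes the agent's role


# The reviewer can read and search but has no editing tool at all.
REVIEWER = SubAgentSpec("reviewer", "large-model", frozenset({"read_file", "search_code"}))
# Documentation work is routed to a cheaper tier.
DOCS = SubAgentSpec("docs", "small-model", frozenset({"read_file", "write_docs"}))


def authorize(spec: SubAgentSpec, tool: str) -> bool:
    """Allow a tool call only if the tool is on the sub-agent's allowlist."""
    return tool in spec.allowed_tools


def route(task_kind: str) -> SubAgentSpec:
    """Cost-aware routing: pick the sub-agent (and model tier) by task type."""
    routes = {"code_review": REVIEWER, "documentation": DOCS}
    return routes[task_kind]


print(authorize(REVIEWER, "edit_file"))   # the reviewer cannot edit code
print(route("documentation").model)
```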

Multi-Agent Teams: Coordinated Parallel AI Agents

Agent teams are different. Instead of one main agent delegating isolated tasks, a lead agent coordinates multiple agents working in parallel.

This is useful for large research, migration, refactoring, or analysis tasks where different streams of work can happen simultaneously.

Anthropic describes multi-agent systems where a lead agent decomposes work into subtasks and delegates those subtasks to subagents, each with its own objective, output format, tool guidance, and boundaries [2].

For software teams, the opportunity is clear: agent teams can compress work that usually requires long sequential cycles.

A migration discovery process, for example, could involve:

- One agent mapping dependencies

- One agent reviewing database usage

- One agent identifying deprecated APIs

- One agent scanning tests

- One agent drafting the migration plan

- One agent checking security and compliance risks

The human engineering lead then reviews the synthesized output instead of manually coordinating every discovery task. When clients need extra hands to design and run these workflows in real codebases, our staff augmentation teams plug in alongside internal engineering to keep velocity high.

This does not remove engineering judgment. It changes where engineering judgment is applied.
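The lead-and-workers shape can be sketched with `asyncio`. Here `run_worker` is a stand-in for a real agent call, and the objectives are taken from the migration example above; everything else is an assumption made for illustration.

```python
import asyncio


async def run_worker(objective: str) -> str:
    """Stand-in for one agent working on a single objective."""
    await asyncio.sleep(0)  # placeholder for a model or tool call
    return f"[{objective}] findings"


async def lead(objectives: list[str]) -> str:
    """Fan work out in parallel, then synthesize results for human review."""
    results = await asyncio.gather(*(run_worker(o) for o in objectives))
    return "\n".join(results)  # gather preserves the order of the objectives


report = asyncio.run(
    lead(["map dependencies", "review database usage", "identify deprecated APIs"])
)
print(report)
```

The human lead reviews `report`, the synthesized output, rather than each worker's raw transcript.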

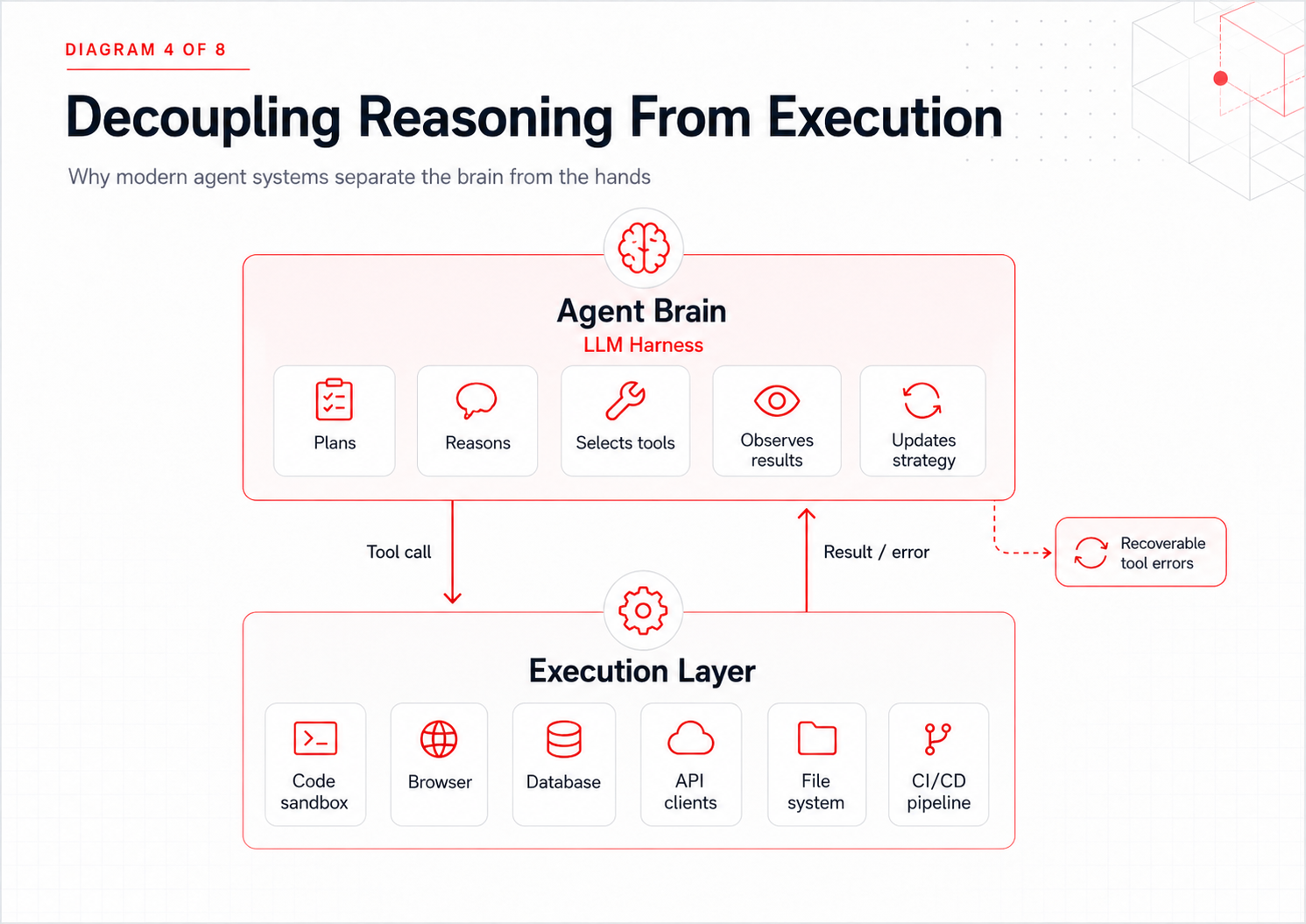

3. Decoupling the Brain From the Hands in Autonomous AI Agents

Early agent systems often bundled everything together: the model harness, the execution environment, memory, and tool access all lived in one fragile runtime.

That architecture is risky.

If the execution environment crashes, the session can be lost. If a tool call fails, the agent may lose state. If the sandbox becomes slow, the whole system becomes slow.

Modern agent architecture separates the “brain” from the “hands.”

The brain is the LLM harness: planning, reasoning, deciding, and observing.

The hands are the external tools and execution environments: sandboxes, terminals, APIs, databases, browsers, file systems, and deployment systems.

When these are decoupled, a failed execution environment becomes a recoverable tool error instead of a full session failure. The agent can observe the failure, provision a new environment, retry, or choose a different path.
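A minimal sketch of that idea, assuming a hypothetical `Sandbox` class: the harness catches the environment failure, reports it as an ordinary tool result, and provisions a replacement instead of losing the session.

```python
from dataclasses import dataclass


@dataclass
class ToolResult:
    ok: bool
    output: str


class Sandbox:
    """Hypothetical execution environment that may be unavailable."""

    def __init__(self, healthy: bool):
        self.healthy = healthy

    def run(self, command: str) -> str:
        if not self.healthy:
            raise RuntimeError("sandbox unavailable")
        return f"ran: {command}"


def call_tool(sandbox: Sandbox, command: str) -> ToolResult:
    """Convert an environment crash into a result the agent can observe."""
    try:
        return ToolResult(True, sandbox.run(command))
    except RuntimeError as exc:
        return ToolResult(False, f"tool error: {exc}")


def run_with_recovery(command: str, provision, max_attempts: int = 3) -> ToolResult:
    """Provision a fresh environment for each attempt until one succeeds."""
    result = ToolResult(False, "not attempted")
    for _ in range(max_attempts):
        result = call_tool(provision(), command)
        if result.ok:
            break
    return result


# The first sandbox is broken; the second one works.
pool = iter([Sandbox(healthy=False), Sandbox(healthy=True)])
result = run_with_recovery("pytest -q", lambda: next(pool))
print(result)
```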

For enterprise CTOs, this separation matters because it supports reliability, security, auditability, and operational resilience. Those are the same concerns that already shape cloud architecture, DevOps, and platform engineering.

Agents should be treated less like magical assistants and more like distributed systems.

4. MCP and Agent-Ergonomic Tools for AI Agents

Agents are only as useful as the tools they can operate.

That is why the Model Context Protocol, tool discovery, and agent-oriented interface design are becoming important parts of modern AI engineering.

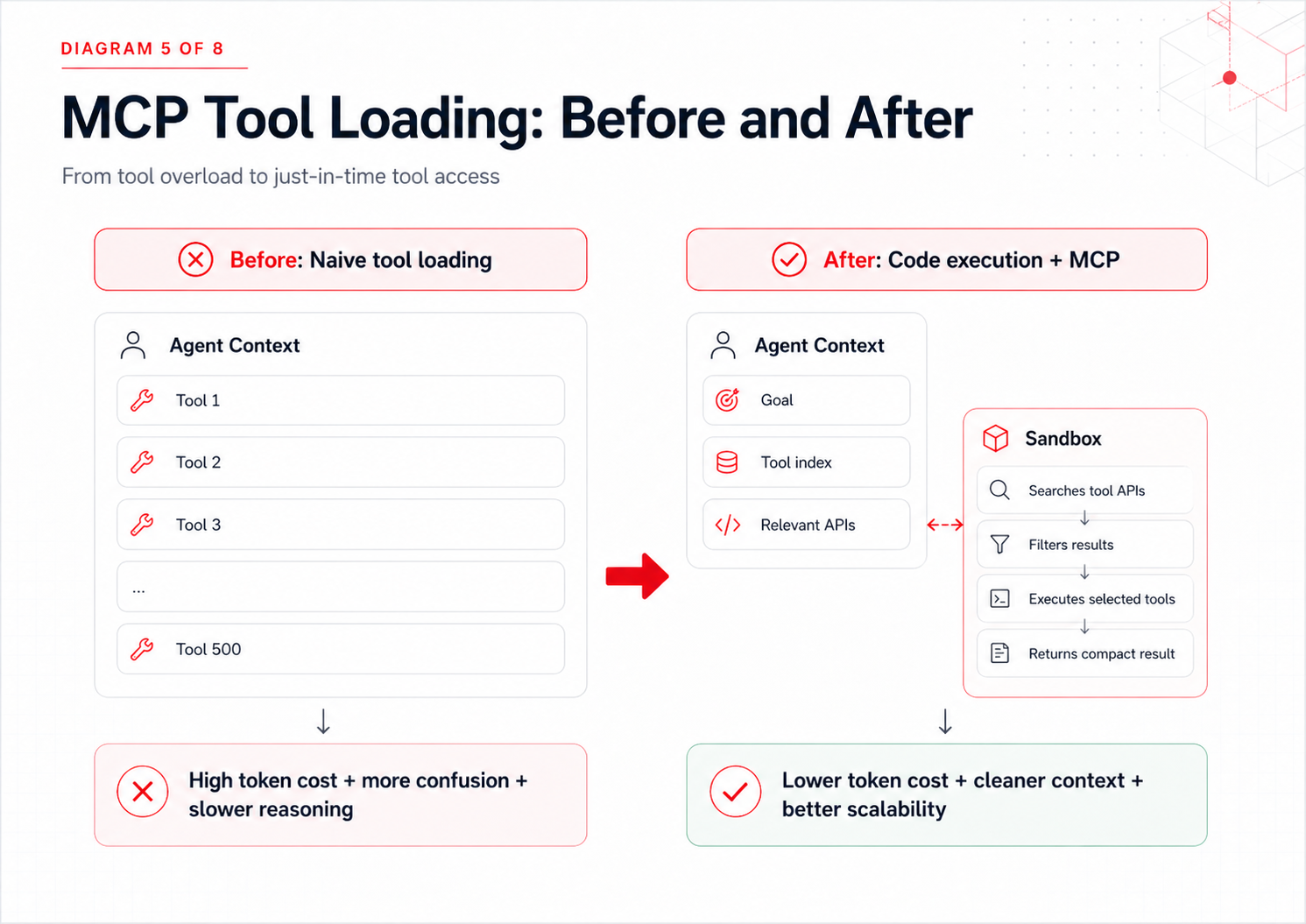

Why Tool Loading Becomes Expensive for AI Agents

A naive agent architecture loads every available tool definition into the model’s context window. That works for small demos. It fails at scale.

If an enterprise agent has access to Jira, GitHub, Slack, Salesforce, Google Drive, AWS, Datadog, Linear, Notion, internal APIs, and production dashboards, loading every tool definition upfront becomes slow, expensive, and confusing.

Anthropic has described a code execution approach in which agents call MCP servers through code rather than through tool definitions loaded upfront, which uses far fewer tokens. In the example described, code execution reduced token usage from roughly 150,000 tokens to 2,000 tokens [3].

That pattern is important because it treats the agent less like a chatbot and more like a software operator that can inspect, filter, and execute tools just in time.
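The sketch below illustrates only the general idea of on-demand tool discovery, not Anthropic's implementation or the MCP wire protocol. The catalog contents, `search_tools`, and `load_definition` are hypothetical; the point is that the agent sees a short list of matching names before any full definition enters its context.

```python
import re

# Hypothetical catalog: in a real system this would live outside the context window.
TOOL_CATALOG = {
    "jira_search_issues": "Search Jira issues by text, status, or assignee.",
    "github_search_prs": "Search GitHub pull requests by text, author, or state.",
    "datadog_query_metrics": "Query Datadog metrics for a service and time range.",
}


def tokenize(text: str) -> set[str]:
    """Lowercase and split on anything that is not a letter or digit."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def search_tools(query: str, limit: int = 2) -> list[str]:
    """Return the names of the best-matching tools by simple keyword overlap."""
    words = tokenize(query)
    scored = []
    for name, description in TOOL_CATALOG.items():
        score = len(words & tokenize(f"{name} {description}"))
        if score:
            scored.append((score, name))
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for _, name in scored[:limit]]


def load_definition(name: str) -> dict:
    """Load one full definition only after the agent has chosen the tool."""
    return {"name": name, "description": TOOL_CATALOG[name]}


matches = search_tools("open pull requests on github")
print(matches, load_definition(matches[0]))
```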

What Makes a Tool Agent-Friendly?

Engineering teams need to design tools for non-deterministic agents, not just deterministic software.

A human can often infer what a vague API does. An agent may not.

Good agent tools should be:

Workflow-oriented

Instead of exposing several low-level tools, expose one tool that completes a meaningful workflow. For example, schedule_event is easier for an agent than separate list_calendars, check_availability, create_event, and send_invite calls.

Clearly namespaced

Tools like jira_search_issues, github_search_prs, and asana_search_tasks are easier to distinguish than three generic tools all named search.

Semantically rich

Agents reason better with meaningful names, file types, descriptions, statuses, and relationships than with cryptic IDs.

Permission-aware

A production deployment tool should not be available to every agent by default. Agents need the same permission boundaries we expect from human operators.
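To make the workflow-oriented idea concrete, here is a small sketch of a single `schedule_event` tool that checks availability and books the event in one call. The in-memory `Calendar` type and the slot strings are assumptions for illustration; a real tool would wrap a calendar API.

```python
from dataclasses import dataclass, field


@dataclass
class Calendar:
    """Hypothetical in-memory calendar used only for this example."""
    busy: set[str] = field(default_factory=set)           # occupied slots
    events: dict[str, str] = field(default_factory=dict)  # slot -> title


def schedule_event(calendar: Calendar, title: str, candidate_slots: list[str]) -> dict:
    """One workflow-level tool: find the first free slot and book it."""
    for slot in candidate_slots:
        if slot not in calendar.busy:
            calendar.busy.add(slot)
            calendar.events[slot] = title
            # Return semantically rich fields rather than an opaque ID.
            return {"status": "scheduled", "slot": slot, "title": title}
    return {"status": "no_availability", "tried": candidate_slots}


calendar = Calendar(busy={"Mon 10:00"})
outcome = schedule_event(calendar, "Design review", ["Mon 10:00", "Mon 14:00"])
print(outcome)
```

The agent makes one decision (schedule this meeting) instead of orchestrating several low-level calls where each intermediate step is a chance to go wrong.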

This is where MagmaLabs’ engineering discipline becomes especially relevant. Building agentic systems is not only about connecting APIs—it is about designing safe, ergonomic workflows that fit the way real teams build, review, ship, and maintain software. That mindset is at the core of our AI development services.

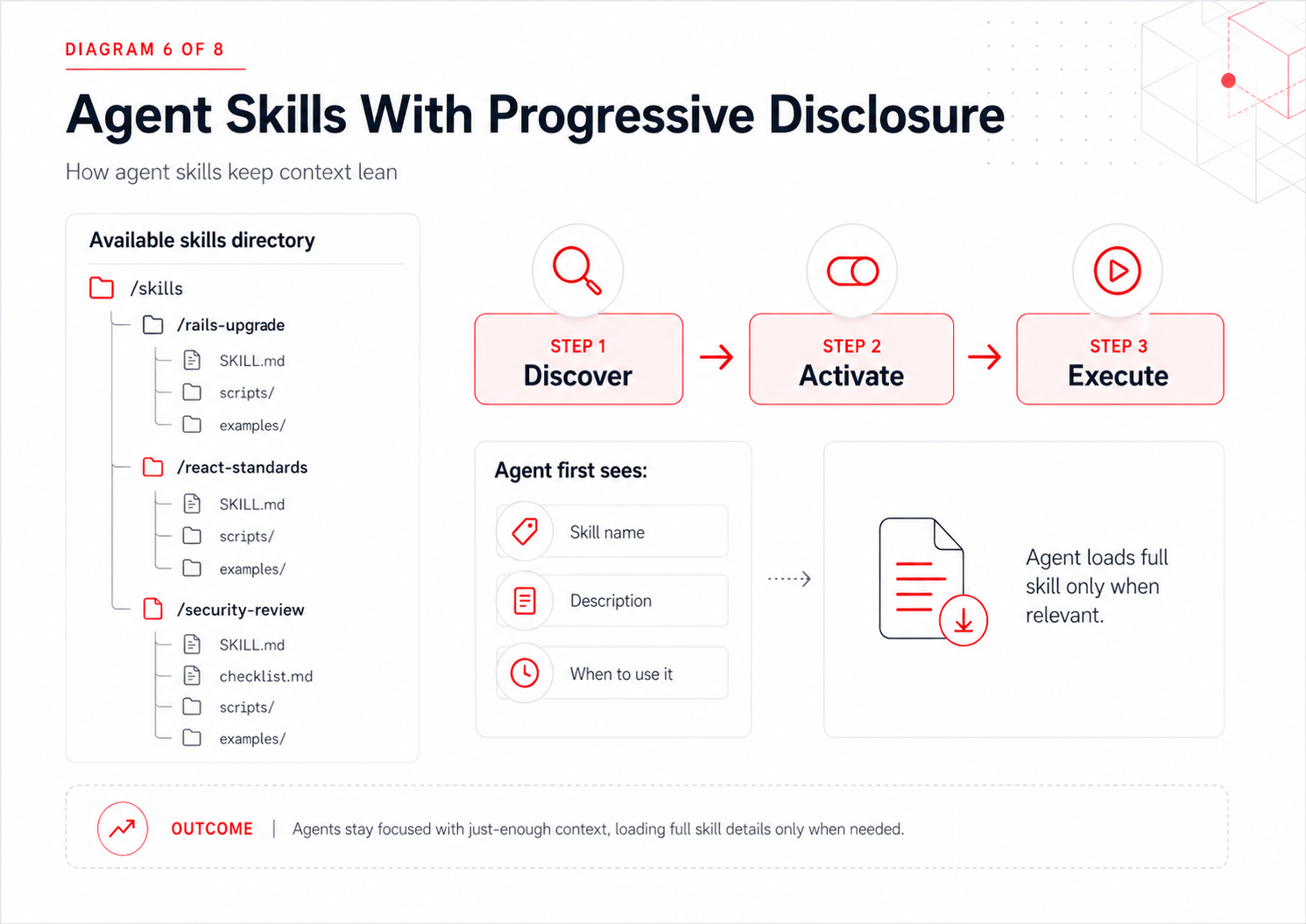

5. Agent Skills and Progressive Disclosure for AI Agents

As agents become more capable, teams will want to give them specialized knowledge: coding standards, deployment rules, brand guidelines, compliance requirements, architecture preferences, and domain-specific playbooks.

The challenge is that loading all of this into every prompt creates context bloat.

A better pattern is progressive disclosure.

Anthropic describes Agent Skills as folders containing instructions, scripts, and resources that agents can load only when relevant. Progressive disclosure is the core design principle: the agent first sees high-level metadata, then loads deeper instructions and resources when needed [4].
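Progressive disclosure can be sketched in a few lines. This is not the Agent Skills file format itself; the `Skill` type, the skill names, and the instruction text are invented for illustration. The agent always sees the short index, and a skill's full body is loaded only when that skill is activated.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Skill:
    name: str
    description: str               # short metadata, always visible to the agent
    load_body: Callable[[], str]   # full instructions, loaded only on activation


SKILLS = [
    Skill(
        "rails-upgrade",
        "Steps and checks for upgrading a Rails application.",
        lambda: "1. Audit gem compatibility.\n2. Upgrade one minor version at a time.",
    ),
    Skill(
        "pr-review",
        "Checklist for reviewing pull requests against team conventions.",
        lambda: "1. Confirm tests cover the change.\n2. Check naming conventions.",
    ),
]


def metadata_index(skills: list[Skill]) -> str:
    """The only skill text that is placed in every prompt."""
    return "\n".join(f"- {s.name}: {s.description}" for s in skills)


def activate(skills: list[Skill], name: str) -> str:
    """Load the detailed instructions for one skill on demand."""
    for skill in skills:
        if skill.name == name:
            return skill.load_body()
    raise KeyError(name)


index = metadata_index(SKILLS)
body = activate(SKILLS, "rails-upgrade")
print(index)
print(body)
```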

For software teams, this opens the door to reusable organizational intelligence.

A company could maintain skills such as:

- Rails upgrade skill

- React component standards skill

- Shopify integration skill

- AWS incident response skill

- HIPAA-aware development skill

- FinTech compliance review skill

- Pull request review skill

- Test coverage improvement skill

Instead of relying on individual engineers to remember every convention, teams can encode repeatable expertise into reusable agent skills.

That is not just automation. It is knowledge transfer.

And for scaling startups or enterprise teams struggling with onboarding, consistency, and quality, this can become a major competitive advantage—similar to the leverage we have seen building MVPs and scalable products for early-stage and growth-stage companies.

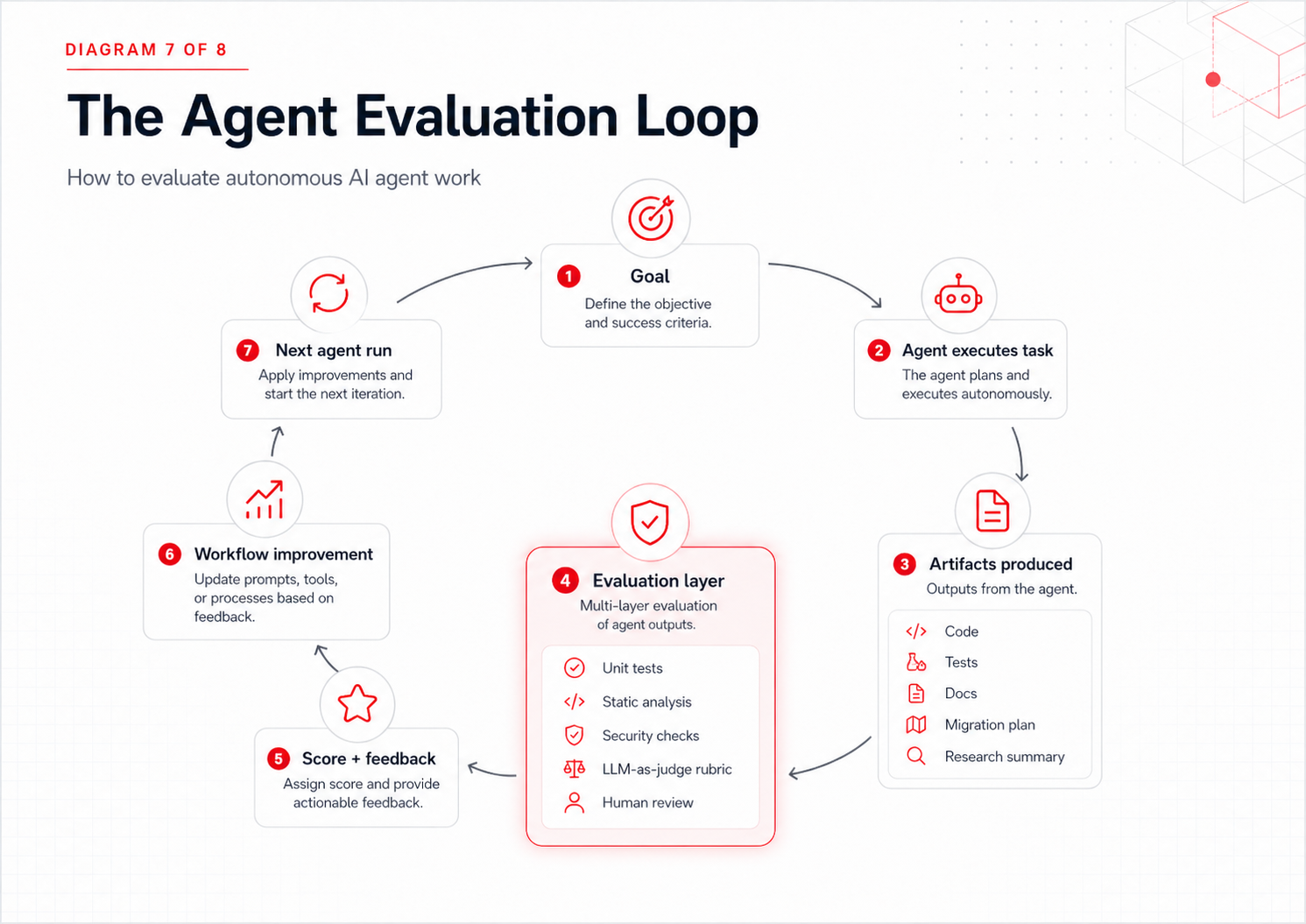

6. AI Agent Evaluation: The Only Safe Way to Scale Autonomous Work

Traditional software is tested by checking whether known inputs produce expected outputs.

Agents are different.

They are non-deterministic. They may take different paths to reach the same goal. One successful run does not guarantee the next run will behave the same way.

That means teams need to evaluate outcomes, not just steps.

This is where agent evals become essential.

What Should AI Agent Evals Measure?

For software engineering agents, evals may include:

- Did the code compile?

- Did the tests pass?

- Did the agent preserve existing behavior?

- Did it follow architectural constraints?

- Did it introduce security risks?

- Did it produce readable, maintainable code?

- Did it document important changes?

- Did it avoid touching restricted files?

- Did it complete the task within acceptable cost and time?

Some of these can be graded with deterministic code-based checks. Others require model-based graders using strict rubrics.

The best systems use both.
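The deterministic half is the easier one to sketch. Assuming a hypothetical `RunRecord` that captures what an agent run did, code-based graders are plain boolean checks; the restricted paths and cost threshold below are example values, not recommendations. Qualities such as readability would still need a rubric-driven model grader, which is not shown.

```python
from dataclasses import dataclass


@dataclass
class RunRecord:
    """Hypothetical summary of one agent run."""
    tests_passed: bool
    files_changed: list[str]
    cost_usd: float


RESTRICTED_PREFIXES = ("infra/", "secrets/")  # example values


def grade(run: RunRecord, max_cost_usd: float = 5.0) -> dict[str, bool]:
    """Deterministic, code-based checks on the outcome of a run."""
    return {
        "tests_pass": run.tests_passed,
        "no_restricted_files": not any(
            path.startswith(RESTRICTED_PREFIXES) for path in run.files_changed
        ),
        "within_budget": run.cost_usd <= max_cost_usd,
    }


run = RunRecord(tests_passed=True, files_changed=["app/models/user.rb", "infra/prod.tf"], cost_usd=1.2)
scores = grade(run)
print(scores, all(scores.values()))
```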

pass@k vs. pass^k for AI Agent Evaluation

Agent evaluation also requires the right success metric.

pass@k measures whether an agent gets the correct result in at least one of k attempts. This is useful for coding tasks where multiple attempts are acceptable and a human reviews the final output.

pass^k measures whether the agent succeeds on every one of k attempts. This matters for customer-facing workflows, compliance-sensitive tasks, or production operations where one failure can be expensive.
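A quick calculation shows how far the two metrics diverge. The sketch assumes each attempt succeeds independently with the same probability p, which is a simplification; real attempts are often correlated.

```python
def pass_at_k(p: float, k: int) -> float:
    """Probability that at least one of k independent attempts succeeds."""
    return 1 - (1 - p) ** k


def pass_hat_k(p: float, k: int) -> float:
    """Probability that all k independent attempts succeed (pass^k)."""
    return p ** k


p, k = 0.8, 3
print(f"pass@{k} = {pass_at_k(p, k):.3f}")   # 0.992
print(f"pass^{k} = {pass_hat_k(p, k):.3f}")  # 0.512
```

With an 80% per-attempt success rate and three attempts, the agent looks nearly perfect under pass@k and succeeds every time in only about half of cases under pass^k. The agent is the same in both cases; only the risk question being asked differs.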

For MagmaLabs clients, this distinction matters because different businesses have different risk profiles.

An MVP founder may accept a human-reviewed agent workflow that accelerates prototyping. An enterprise FinTech director needs repeatability, auditability, and stronger controls before agentic automation touches critical systems. We have seen this tradeoff play out across multiple industries our teams support, from FinTech to e-commerce.

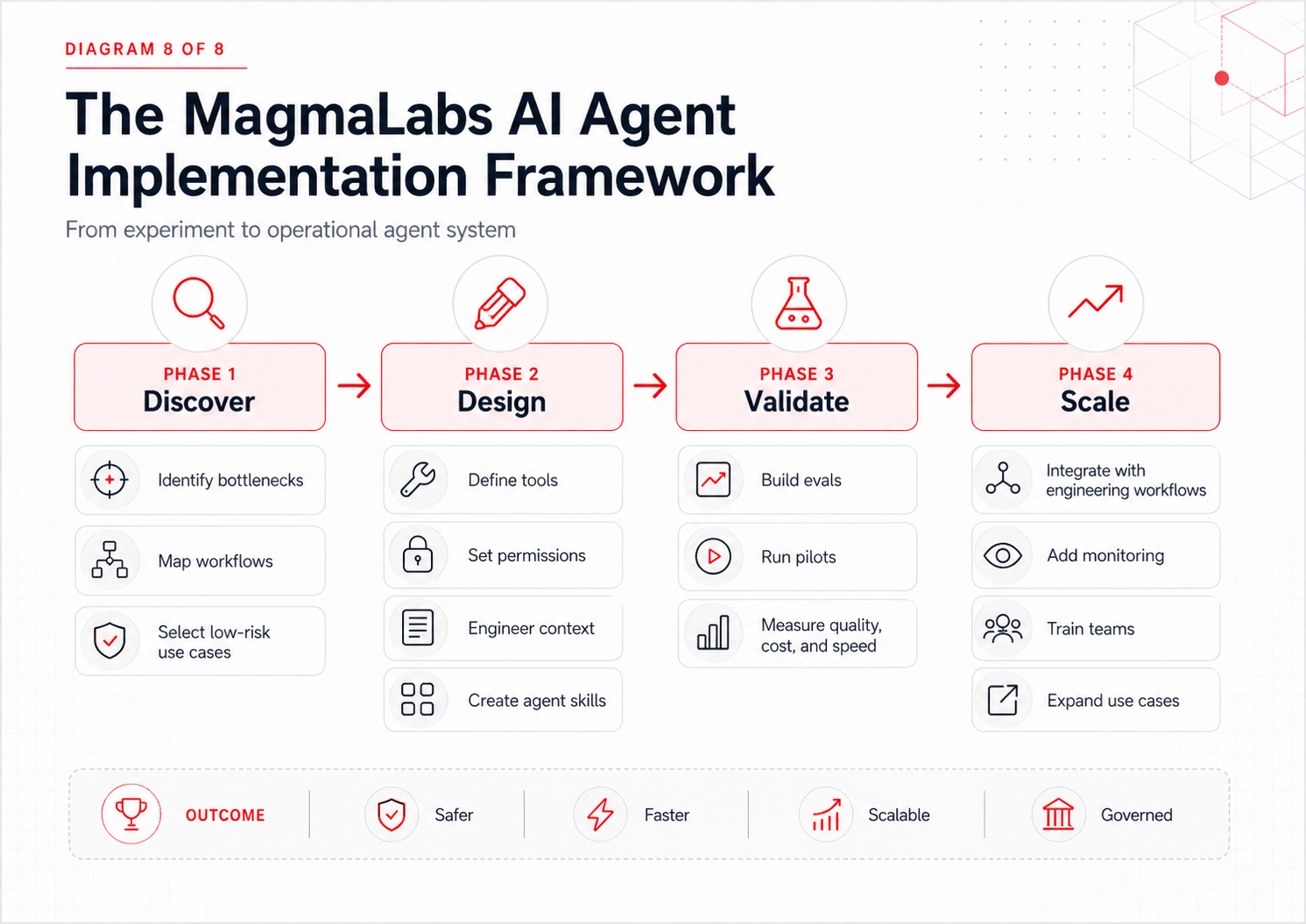

How MagmaLabs Approaches AI Agent Implementation

AI agents should not be adopted as isolated experiments. They should be implemented as part of a broader engineering strategy.

At MagmaLabs, the practical approach starts with three questions.

1. Where Can AI Agents Create Measurable Engineering Leverage?

The best first use cases are not always the flashiest. Strong candidates often include:

- Codebase discovery

- Test generation

- Documentation maintenance

- Pull request review

- Dependency analysis

- Data migration planning

- Support workflow automation

- Internal knowledge retrieval

- QA and regression support

These workflows are valuable because they reduce bottlenecks without immediately handing over high-risk production authority. If you want to see how we have helped other teams operationalize them, our engineering blog covers concrete patterns and case studies.

2. What Constraints Must AI Agents Follow?

Good agentic systems are not unconstrained. They need clear boundaries.

Those boundaries may include:

- Read-only access for reviewer agents

- Approval gates before code changes

- Restricted access to production systems

- Cost ceilings for long-running workflows

- Required test execution before completion

- Human review for security-sensitive tasks

- Audit trails for enterprise environments

For large enterprise buyers, these constraints are essential because compliance, vendor stability, security, and scalability are core buying concerns.
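Several of the boundaries listed above can be expressed as a policy that is checked before every action. The sketch below is one hypothetical shape for such a check; the `Policy` fields, the action names, and the `write` prefix convention are assumptions for illustration, and each decision is appended to a simple audit list.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Policy:
    read_only: bool
    cost_ceiling_usd: float
    needs_approval: frozenset[str]  # actions gated on human sign-off


audit_log: list[tuple[str, str]] = []


def check_action(policy: Policy, action: str, spent_usd: float, approved: bool = False) -> str:
    """Decide whether an action may run, and record the decision."""
    if spent_usd >= policy.cost_ceiling_usd:
        decision = "deny: cost ceiling reached"
    elif policy.read_only and action.startswith("write"):
        decision = "deny: read-only agent"
    elif action in policy.needs_approval and not approved:
        decision = "hold: human approval required"
    else:
        decision = "allow"
    audit_log.append((action, decision))
    return decision


reviewer = Policy(read_only=True, cost_ceiling_usd=2.0, needs_approval=frozenset())
builder = Policy(read_only=False, cost_ceiling_usd=10.0, needs_approval=frozenset({"write_code"}))

print(check_action(reviewer, "write_code", spent_usd=0.1))
print(check_action(builder, "write_code", spent_usd=0.1))
print(check_action(builder, "write_code", spent_usd=0.1, approved=True))
```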

3. How Will AI Agent Success Be Evaluated?

Before scaling agent workflows, teams need to define what “good” means.

For a code review agent, success may mean identifying real issues without overwhelming developers with noise.

For a test-generation agent, success may mean improving coverage while producing maintainable tests.

For a migration-planning agent, success may mean identifying dependencies, risks, and sequencing issues before engineering work begins.

Without evals, teams are only guessing.

With evals, they can improve agent performance systematically.

What AI Agents Mean for Engineering Leaders

AI agents will not eliminate the need for strong engineering teams.

They will raise the bar for what strong engineering teams can accomplish.

The teams that benefit most will be the ones that learn how to:

- Break large goals into agent-ready tasks

- Design safe tool boundaries

- Maintain clean context

- Use sub-agents and agent teams appropriately

- Build reusable skills

- Evaluate outcomes rigorously

- Keep humans in the loop where judgment matters

This is especially important for companies under pressure to move faster without sacrificing quality.

For early-stage founders, agents can accelerate MVP discovery, prototyping, and documentation.

For scaling CTOs, agents can reduce bottlenecks in testing, refactoring, and feature delivery.

For engineering managers, agents can help extend team capacity without losing visibility.

For enterprise technology leaders, agents can support automation while preserving security, compliance, and operational discipline.

These are exactly the kinds of tradeoffs MagmaLabs helps clients navigate: speed versus quality, flexibility versus governance, innovation versus maintainability. See how we have helped other teams ship faster without compromising engineering discipline.

FAQ: AI Agents in Software Engineering

What is an AI agent?

An AI agent is a system that can work toward a goal by reasoning, choosing tools, taking actions, observing results, and adjusting its behavior. Unlike a chatbot, it does not only respond to prompts. It can execute multi-step workflows.

How are AI agents different from AI workflows?

AI workflows usually follow predefined paths designed by humans. AI agents are more dynamic. They can decide which steps to take based on the goal, available tools, and intermediate results.

Are AI agents safe for production software teams?

They can be, but only with proper constraints. Production-ready agentic systems need permission boundaries, human review, tool restrictions, logging, evals, and rollback strategies.

What are sub-agents?

Sub-agents are specialized agents that work on focused tasks in isolated contexts. They are useful for code review, documentation, security analysis, test generation, and research.

What are multi-agent systems?

Multi-agent systems use several agents working together, often coordinated by a lead agent. They are useful for complex tasks that can be split into parallel workstreams.

Why do AI agents need evals?

Agents are non-deterministic. They may produce different results across runs. Evals help teams measure whether agents are producing reliable, safe, and useful outcomes.

The Future of Software Delivery: Orchestrating AI Agents

The next era of AI in software development will not be defined by who has access to the most powerful model.

It will be defined by who knows how to operationalize agents.

That means designing the right workflows, constraints, tools, memory systems, review loops, and evaluation frameworks.

The future is not human versus AI.

It is engineering teams that know how to orchestrate intelligent agents versus teams that only know how to chat with them.

And that is where the real advantage begins.

For organizations ready to move beyond experimentation, AI agents offer a path to faster delivery, smarter engineering operations, and more scalable technical execution.

But like every meaningful technology shift, success will not come from hype. It will come from disciplined implementation, collaborative teams, passionate builders, and a growth mindset.

That is the work ahead.

And it is exactly the kind of work MagmaLabs is built for.

Ready to Explore How AI Agents Can Improve Your Engineering Workflows?

MagmaLabs helps startups, scale-ups, and enterprise teams design practical, secure, and scalable software systems. Let’s identify where agentic workflows can create the most value in your product and engineering organization.